It’s Friday. And my summer is officially ending as we wrap up a weeklong beach vacation. I hope your summer (or winter, for my Aussie readers, who are also hosting the 2023 FIFA Women’s World Cup) is going great.

As always there is a lot of info packed into this edition - I hope you find the time to dig into it and enjoy.

In today’s Brainyacts:

Newsletter format update (let me know if you like)

Is it time for an AI Constitutional Convention?

A major OpenAI update rolls out

Comprehensive coverage of leading AI model updates

News you can use (links to click if the headline grabs you)

👋 to new subscribers!

To read previous editions, click here.

The Lead Story

AI's Coming Constitutional Convention

For centuries, constitutional conventions have been pivotal moments in history for codifying the rights and responsibilities that shape civilized societies. As artificial intelligence rapidly grows more powerful, the AI community faces a similar historic inflection point. The time has come to convene a "Constitutional Convention for AI" - to proactively encode principles that will steer these transformative technologies toward justice, empowerment, and human flourishing.

AI promises immense benefits, from curing diseases to unlocking clean energy. But uncontrolled, it poses existential dangers that could undermine human autonomy and dignity. Lawyers understand well that mere regulations or oversight are no match for a determined bad actor. Fundamental principles must be woven into the very fabric of the system.

Anthropic's "constitutional AI" approach, discussed below, shows promise to become AI's James Madison. Their blueprint enshrines human values like transparency, impartiality, and avoidance of harm within the foundational architecture of AI systems. Of course, progress will require surmounting profound technical and philosophical challenges. AI does not naturally comprehend ethics - its tendencies must be shaped through a combination of great expertise and great care.

We must embrace this constitutional moment. AI architects across industry and academia should convene a "con-con" to debate and ratify AI's first principles.

The legal community, with its depth of knowledge on constructing just societies, must help guide this process. Together, we can plant the seeds of AI's ethical enlightenment - and write the timeless documents that future generations will thank us for creating during AI's embryonic days. The task is monumental, but so too is the opportunity to proactively shape how AI alters the human condition.

Anthropic’s “Constitutional AI” Approach

Anthropic, an AI safety research company, has proposed an approach called "constitutional AI" to help address these concerns. They recently released a free public version of their model, Claude 2.

The key idea behind constitutional AI is designing AI systems with built-in principles and constraints that align the system's goals and behaviors with human values. Just as constitutions in human governments encode principles like free speech or due process, Anthropic aims to define constitutional rules and values for AIs.

For example, an AI assistant with constitutional AI could be designed with transparency rules requiring it to always explain its reasoning, or with privacy protections preventing it from sharing user data without permission. Additional constitutional principles might require AI systems to respect human autonomy, avoid manipulative or deceptive tactics, or minimize harmful unintended consequences of their actions.

Encoding such principles directly into an AI's core architecture is intended to make its alignment with human values a fundamental part of how it operates. This contrasts with more ad-hoc or external alignment approaches. The constitutions are designed to ensure beneficial behaviors even as AI systems become more advanced and autonomous.

Anthropic develops constitutional AI through technical research on topics like machine learning theory, AI safety techniques, and AI self-monitoring. They draw on insights from law, philosophy, and social science to inform the constitutional rulesets. Ongoing research also focuses on mechanisms to allow updating the constitutional principles over time as capabilities and social norms evolve.

Here are some potential benefits and risks of a Constitutional AI approach:

Benefits:

Provides a systematic framework for aligning AI goals and behaviors with human values. This could help address concerns about AI safety.

Constitutional principles could create trust by making AI systems more transparent, traceable, and provably beneficial.

May allow the development of more advanced AI capabilities if the constitutional guardrails provide sufficient safety assurance.

Having alignment mechanisms built-in by design could be more robust than external observation or oversight.

Can draw on vast experience encoding principles and constraints in legal constitutions.

Risks:

Defining comprehensive constitutional principles that fully encode human values is extremely challenging. Important values or edge cases could be overlooked.

Hard to amend constitutions, so may be difficult to update principles to accommodate unforeseen circumstances.

Constitutional constraints could overly limit beneficial uses of AI if not designed carefully.

No governance structure in place to adjudicate unexpected conflicts between constitutional principles.

Constitutional review processes to verify AI system compliance need to be robust and tamper-proof.

Requires extensive research to develop technical and philosophical foundations for constitutional frameworks. Success is not guaranteed.

Spotlight Update

🔑 OpenAI Releases Custom Instructions

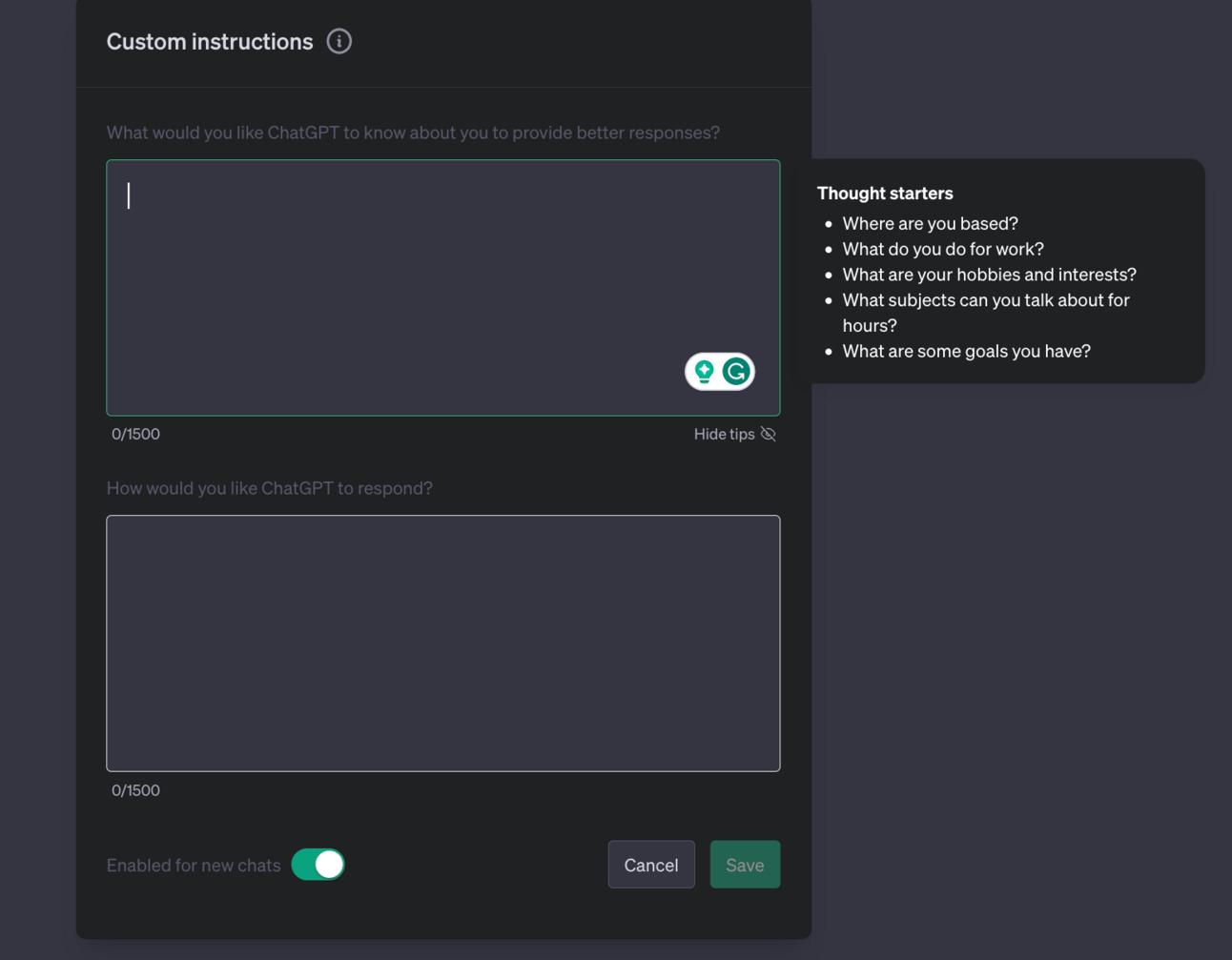

OpenAI just saved us all a ton of time! If you are a Plus subscriber, you now have a way of personalizing your ChatGPT experience. No more wasting time telling ChatGPT who you are or how you want it to respond (two key methods of high-performance prompting). Now you can embed this information directly into ChatGPT settings so it remembers and considers it in every one of its responses.

Key Points:

Custom instructions let you share anything you'd like ChatGPT to consider in its responses, similar to including that information in your prompt

Only Plus plan subscribers have access (coming soon to free users)

Not yet available in the UK and EU

Your custom instructions may be incorporated into some plugins, allowing for a more personalized experience

Your custom instructions will be used to help train OpenAI’s models
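To picture the first key point, here is a minimal Python sketch of the idea that custom instructions work like context you would otherwise retype in every prompt. It is an analogy only, not how OpenAI implements the feature: the `CustomInstructions` class, the `build_prompt` function, and the sample text are all hypothetical names made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class CustomInstructions:
    """Hypothetical container for the two things you would otherwise retype."""
    about_me: str        # who you are
    response_style: str  # how you want ChatGPT to respond


def build_prompt(instructions: CustomInstructions, question: str) -> str:
    """Prepend the saved instructions to a question, as you would by hand."""
    return (
        f"About me: {instructions.about_me}\n"
        f"How to respond: {instructions.response_style}\n"
        f"Question: {question}"
    )


# Saved once (assumed sample text), then reused for every question.
saved = CustomInstructions(
    about_me="I am a litigation attorney in the US.",
    response_style="Be concise and flag anything I should verify.",
)

for question in ["Summarize the hearsay rule.", "Draft a meet-and-confer email."]:
    print(build_prompt(saved, question))
    print("---")
```

The point of the sketch is the time saving: the "about me" and "how to respond" text is written once and travels with every question, instead of being pasted into each new chat.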

Why This Matters: Offering free consumer-grade tools has been a key part of widespread generative AI adoption and the leading success factor for OpenAI specifically. We also know that it is super expensive for OpenAI to run and offer these models! They will need to monetize or recoup investment somewhere. By offering personalization in this manner, they will gain highly valuable insight into their users. How might this be used to benefit users, and how might it be used to exploit them? Users of Gmail know that it is a great tool but that much of its utility is born out of the fact that Google “reads” or knows what is in each email. We consumers make a trade-off - more utility for less privacy. We are heading down a similar path here.

What are some benefits of this feature?

Enhanced User Experience: With the introduction of custom instructions, users can get more tailored and relevant responses. It caters to individual needs and preferences, thereby enhancing user experience and satisfaction.

Time Efficiency: By eliminating the need to repeatedly provide contextual information in every conversation, users can save a significant amount of time. This means users can get more done in less time, making the tool more productive.

Better Understanding of AI: The incorporation of custom instructions into user settings will allow users to learn more about the capabilities and limitations of AI technology. This can foster a greater appreciation for what AI can and cannot do.

What are some risks of this feature?

Filter Bubble: By using an AI that adapts to a user's preferences and instructions, there's a risk of creating a 'filter bubble' – a situation in which the AI becomes overly specialized in a particular user's interests or viewpoints, thereby filtering out diverse or contrary information. This can potentially limit exposure to differing views and ideas, leading to a narrow worldview.

Increased Data Privacy Concerns: With more personal information being shared for a more personalized experience, data privacy becomes a concern. Users are at risk if their data is not properly protected and secured. If the data were to be mishandled, it could lead to serious privacy breaches.

Dependence on AI: With an increasingly tailored experience, there might be a risk of users becoming overly reliant on AI, which could decrease creativity, independent thinking, and problem-solving abilities.

How to access this feature?

You can read more here.

Remember, you need to be a Plus subscriber.

Go to your settings by clicking on the three dots (…) next to your name in the bottom left-hand corner.

Click on Settings & Beta

Click on Beta Features

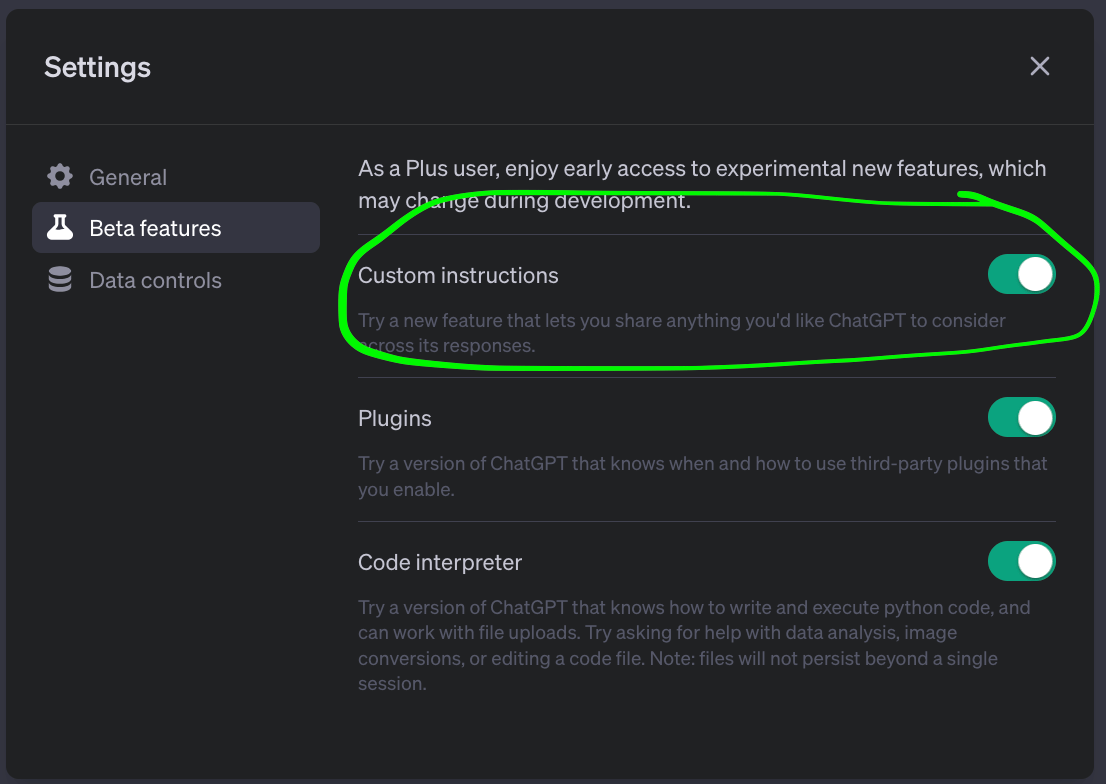

Turn on “Custom instructions” - see pic above

Close out this window

You may need to reload/refresh your browser window to ensure the changes take effect.

Return to the three dots (…) next to your name - you will now see Custom Instructions in the menu.

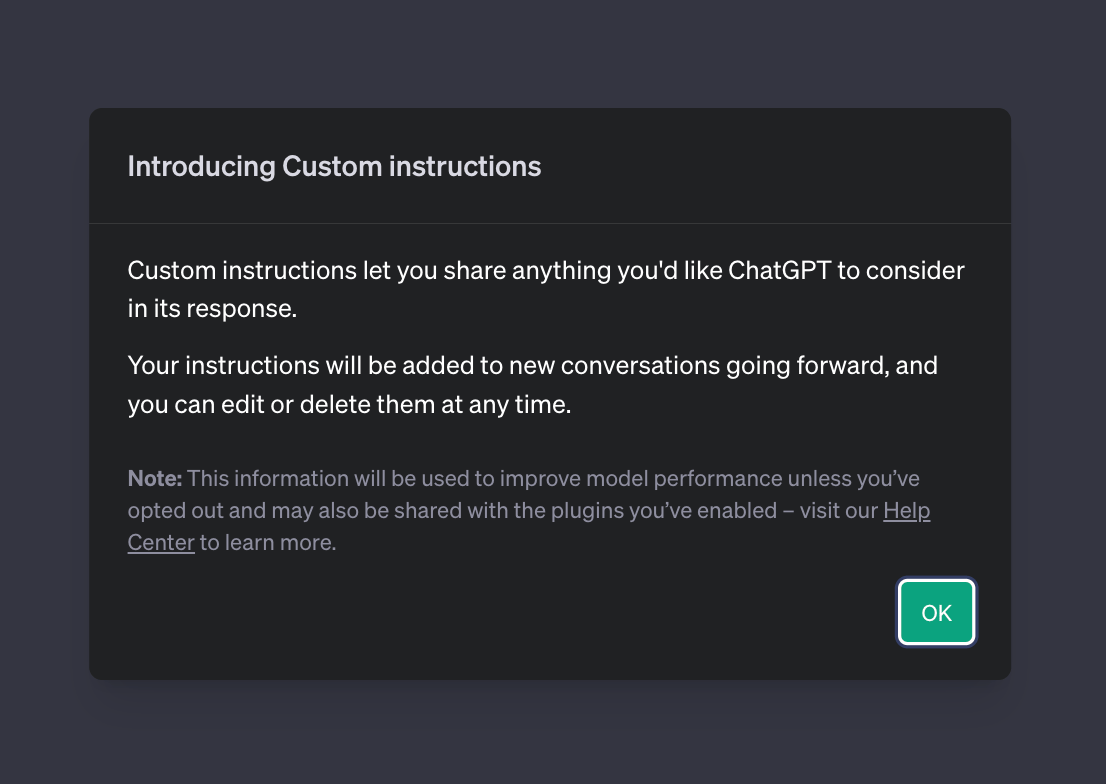

When you click on them the first time, this is what will appear 👇.

Click OK, and work through the rest of the instructions.

Notables

Apple has its own AI model called Ajax, built on Google’s Jax, a machine-learning framework. But Apple still doesn’t know how to commercialize it. Is Siri the obvious choice or will Apple create a new product or service? Rumor has it an “AppleGPT” exists and will be launched next year.

OpenAI vastly outpaces other LLM developers in funding. This is in large part due to Microsoft’s $10B investment at the beginning of the year. Google is the lead investor in Anthropic, a rising competitor to OpenAI. Are IPOs and acquisitions looming?

Does AI spell the end of “artful news writing?” Google is pitching Genesis, an AI news writing tool, to the likes of The New York Times, The Washington Post, and News Corp (owner of the WSJ).

OpenAI is doubling the number of messages ChatGPT Plus customers can send to GPT-4. Rolling out over the next week, the new limit will be 50 messages every 3 hours.

Microsoft’s Bing Chat comes to the enterprise. Microsoft launched Bing Chat Enterprise with enhanced data privacy: no chat data retention, no Microsoft access to customers' employee or business data, and no use of customer data for AI model training.

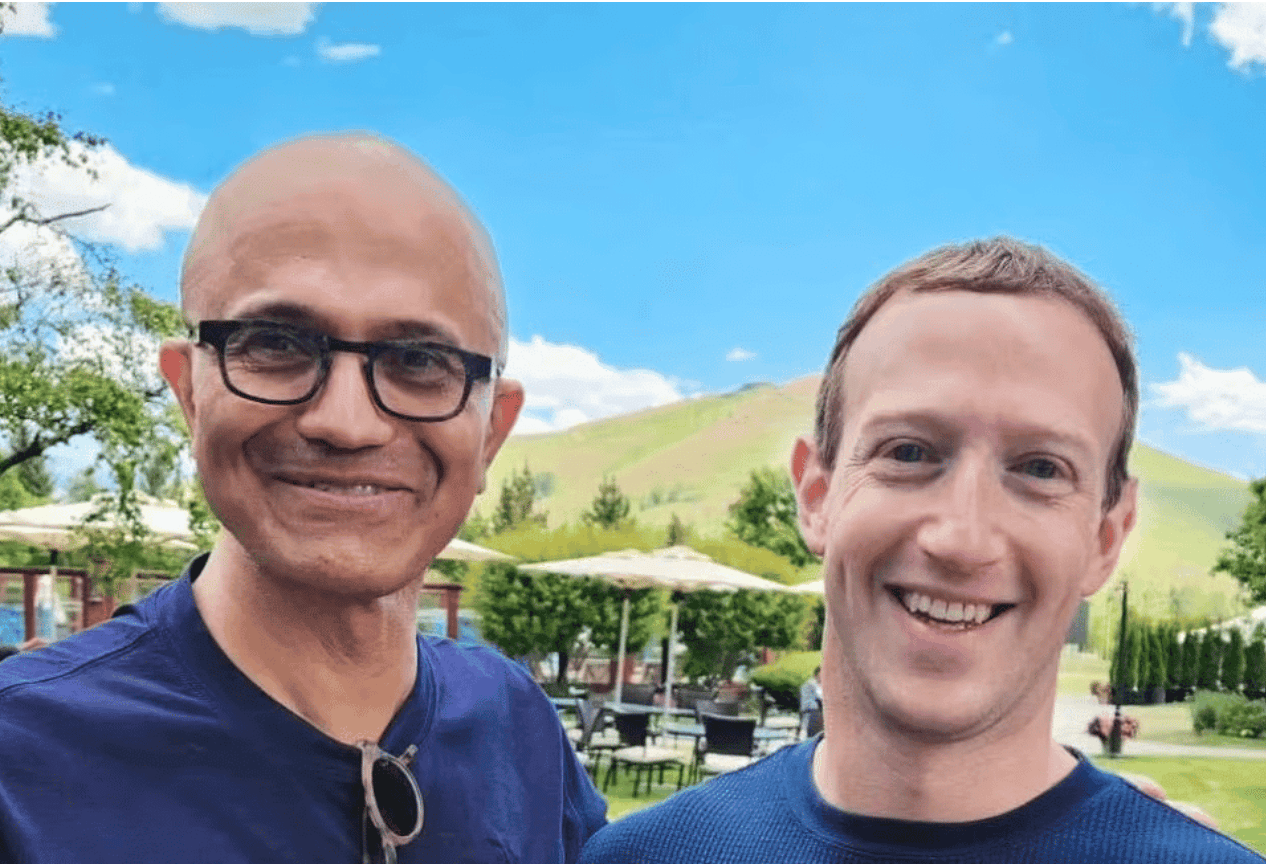

Meta & Microsoft: Llama 2. Microsoft and Meta strengthen their partnership, with Microsoft as the preferred Llama 2 partner. Llama 2 is a new open-source language model, free for research and commercial use. Access is open to people in tech, academia, and policy who support open AI innovation. Access the model here.

Was this newsletter useful? Help me to improve!

DISCLAIMER: None of this is legal advice. This newsletter is strictly educational and is not legal advice or a solicitation to buy or sell any assets or to make any legal decisions. Please be careful and do your own research.